Immersive Extended Reality Experience

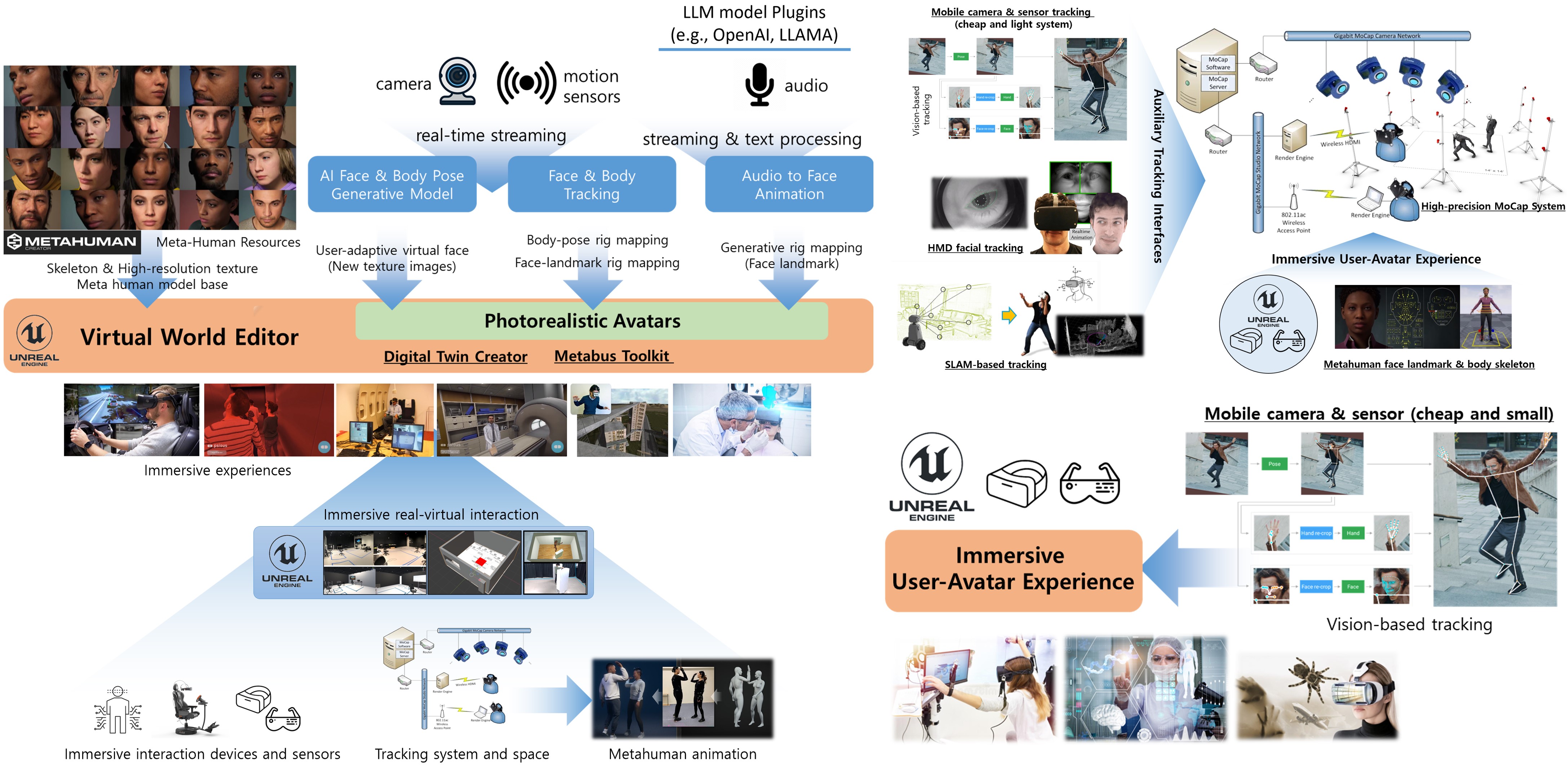

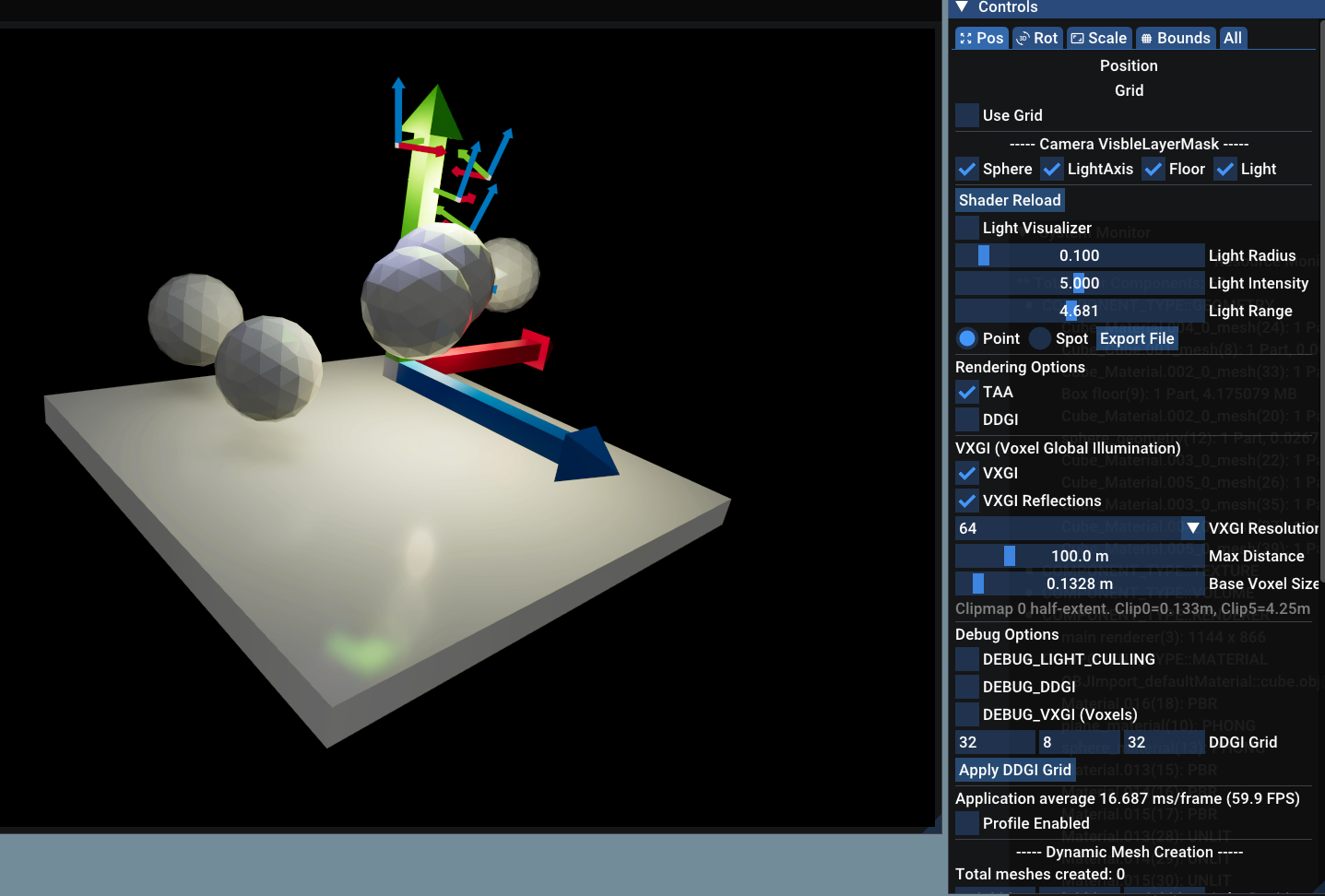

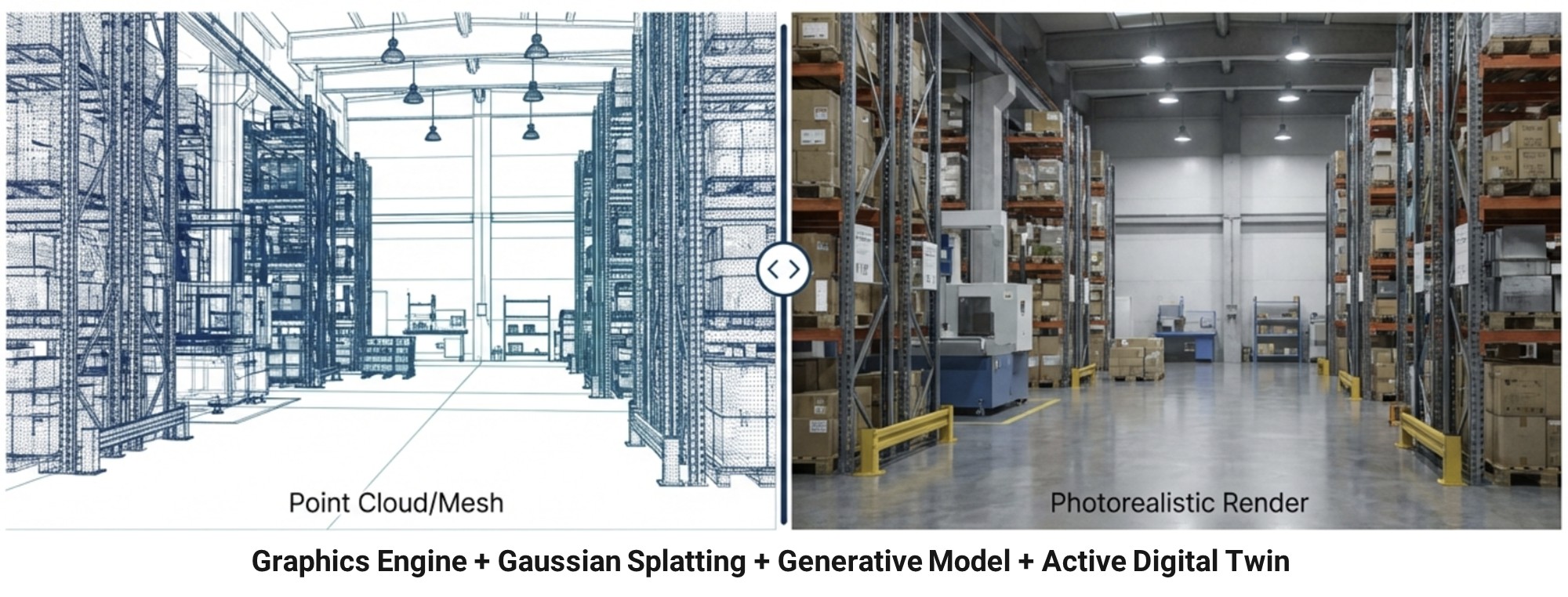

Building on our experience in computer graphics and computer vision, we develop immersive extended reality (XR) experiences that combine the latest Unreal Engine features with modern AI to deliver high-fidelity interactive content. Our focus is on bringing together hyper-realistic rendering, character animation, and AI-driven interaction so that users can engage with virtual environments in ways that feel responsive and believable.

Specifically, we are interested in:

- AI characters connected to LLMs — interactive virtual humans (e.g., Unreal Engine MetaHuman) whose dialogue, behavior, and reactions are driven by large language models, supporting natural, context-aware conversations and decision-making inside XR scenes.

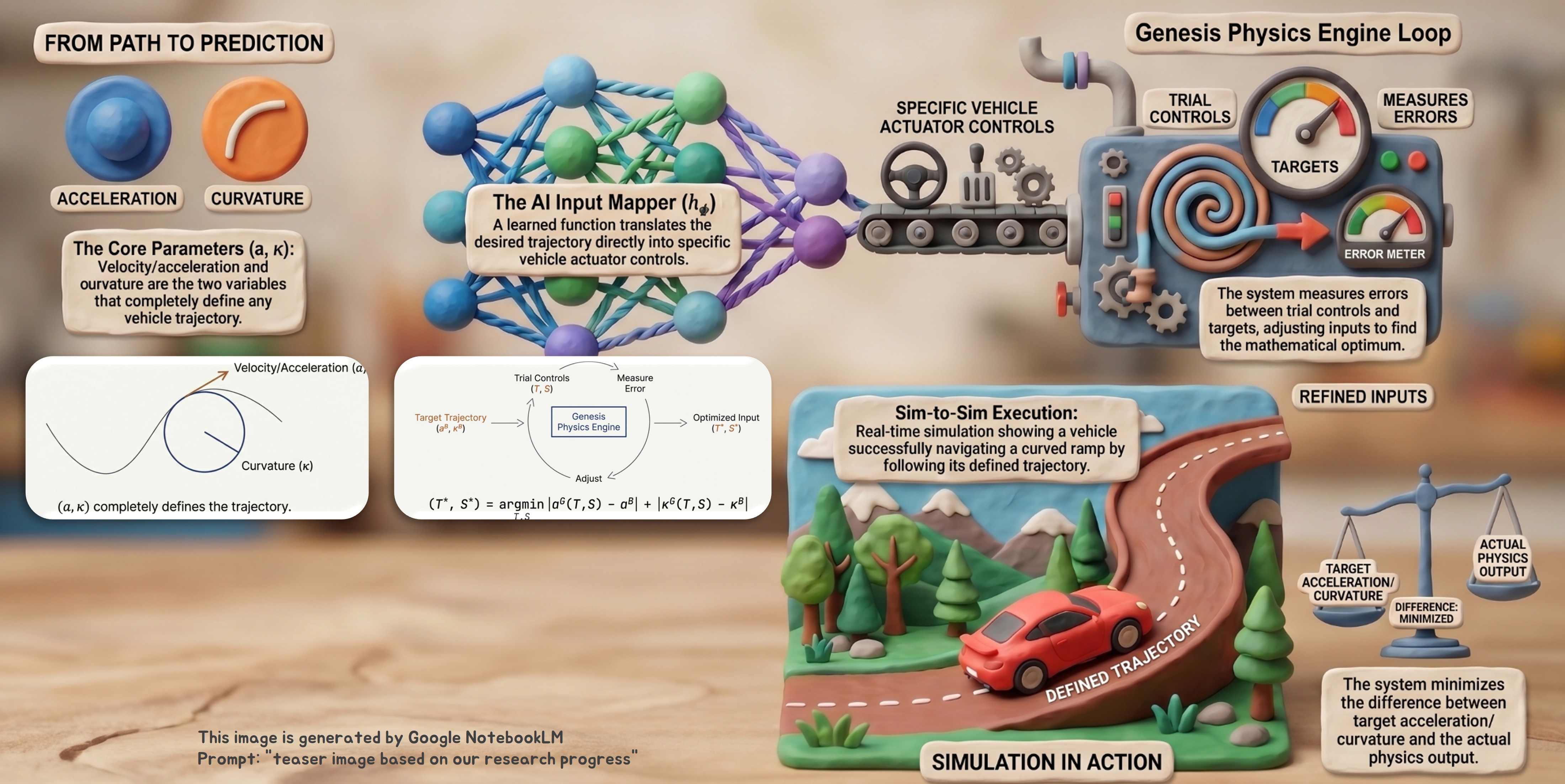

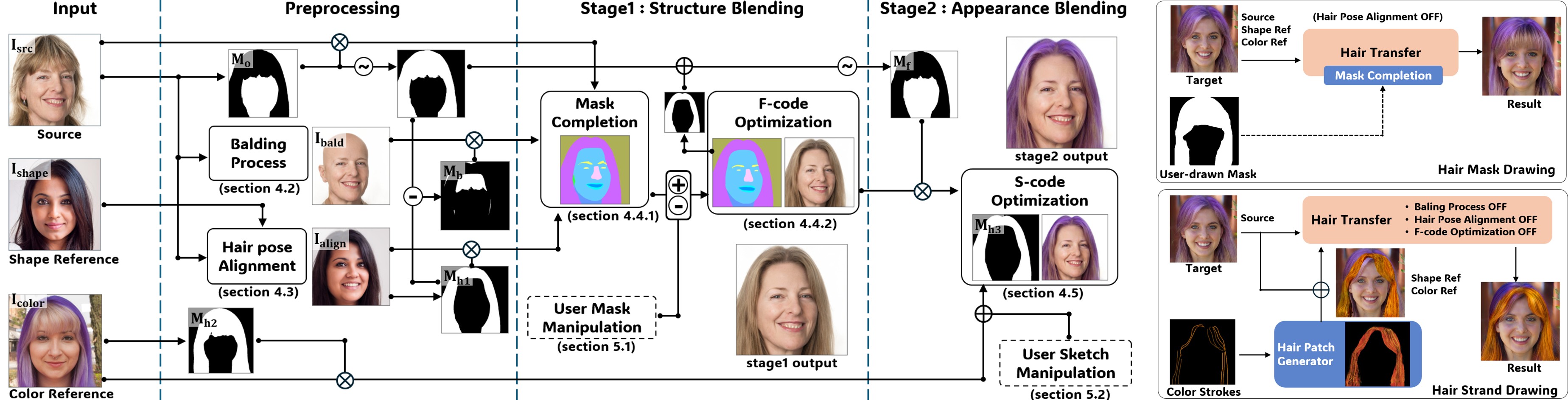

- Generative-model-powered XR content — applying modern image, video, and 3D generative models to author and adapt XR content on the fly, enabling diverse application cases that go beyond pre-authored assets.

- High-quality immersive plug-ins — efficient plug-ins on top of Unreal Engine’s hyper-realistic rendering pipeline and MetaHuman resources, designed to make these capabilities accessible to domain-specific applications.

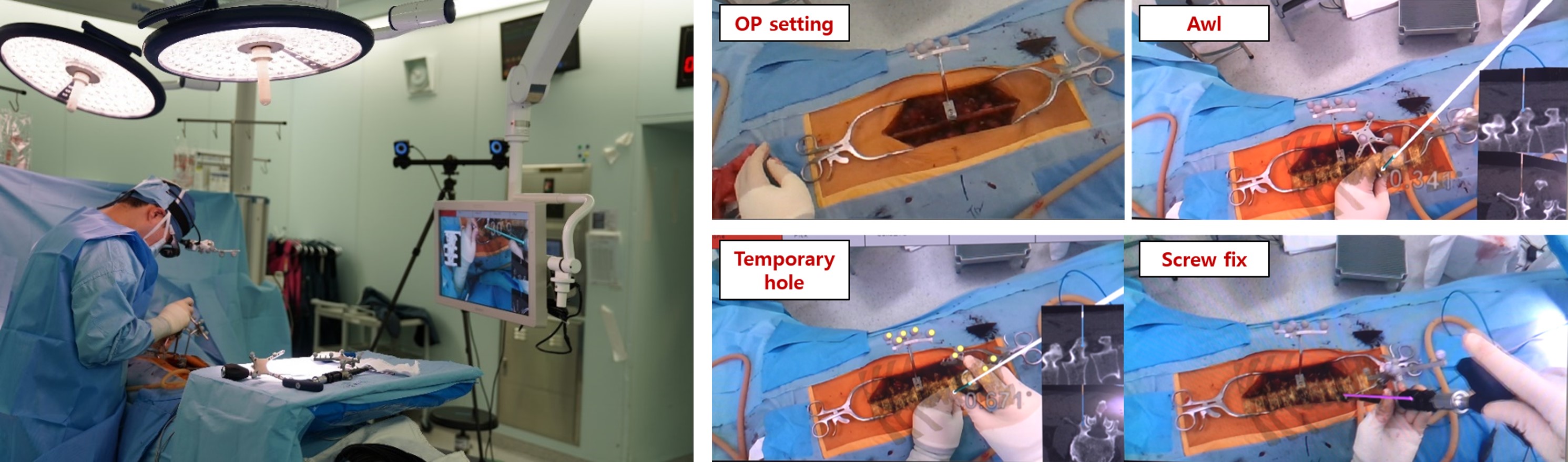

In an earlier collaboration with Prof. HyunJeong Kim of Seoul National University Dental Hospital, our laboratory worked on immersive XR content for clinical exposure-therapy scenarios.

Programming experience

Unreal Engine C++/Blueprint, Python, LLM/agent frameworks