AR Navigation-based Surgical Guidance System

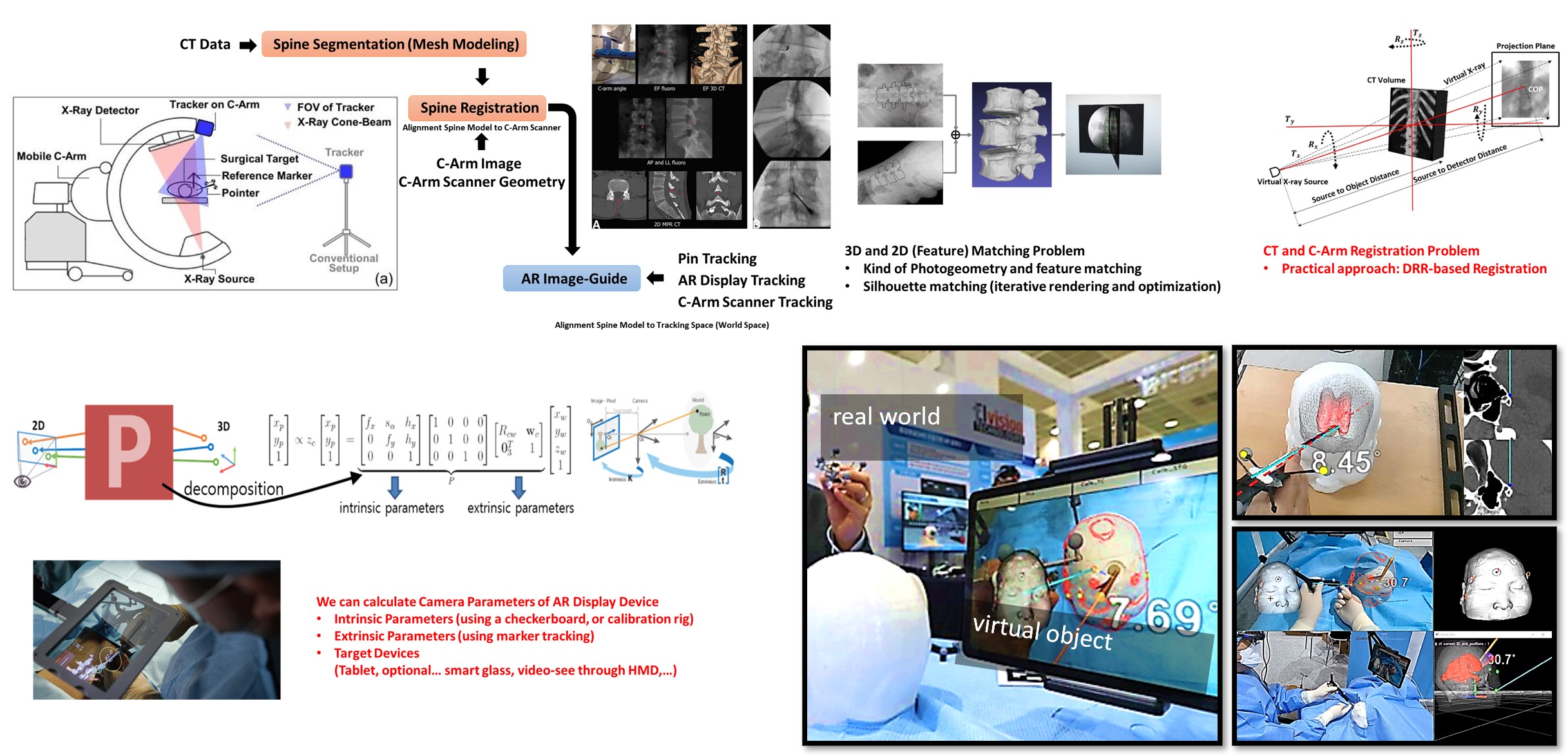

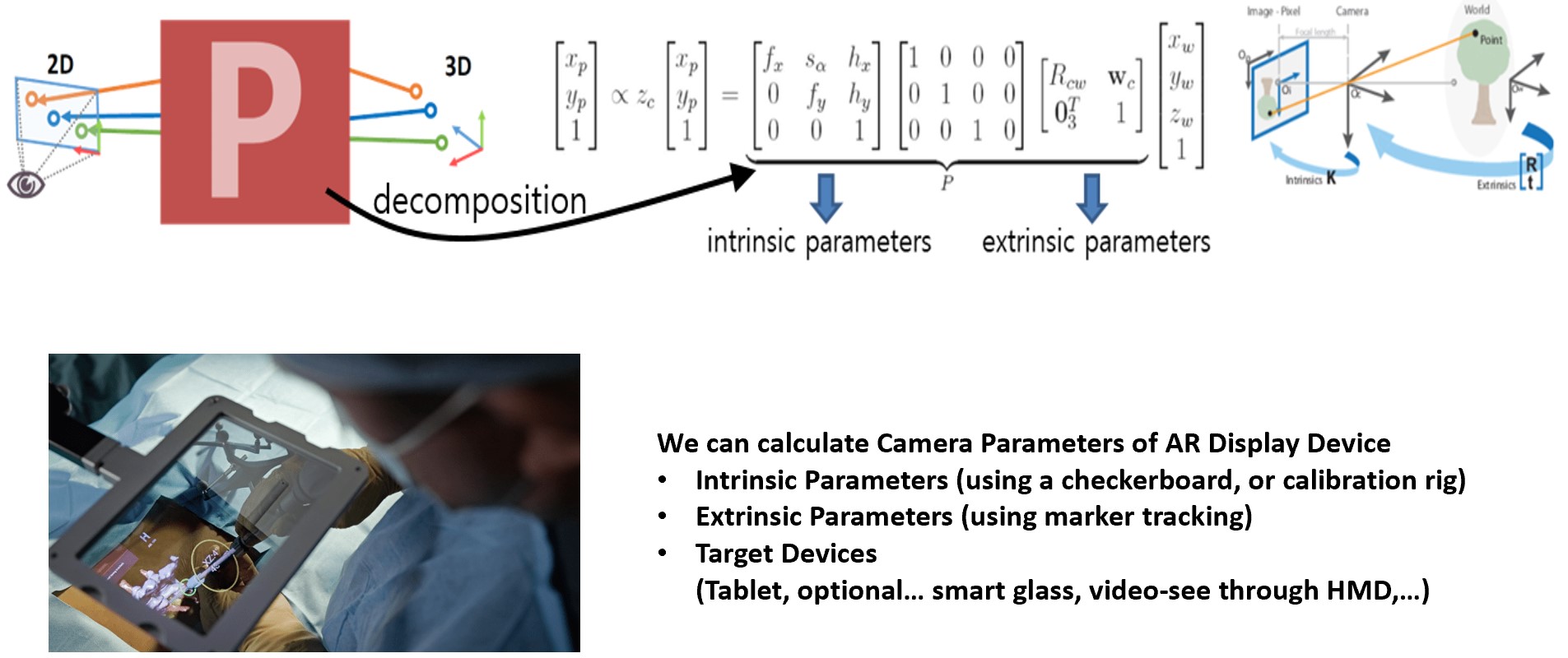

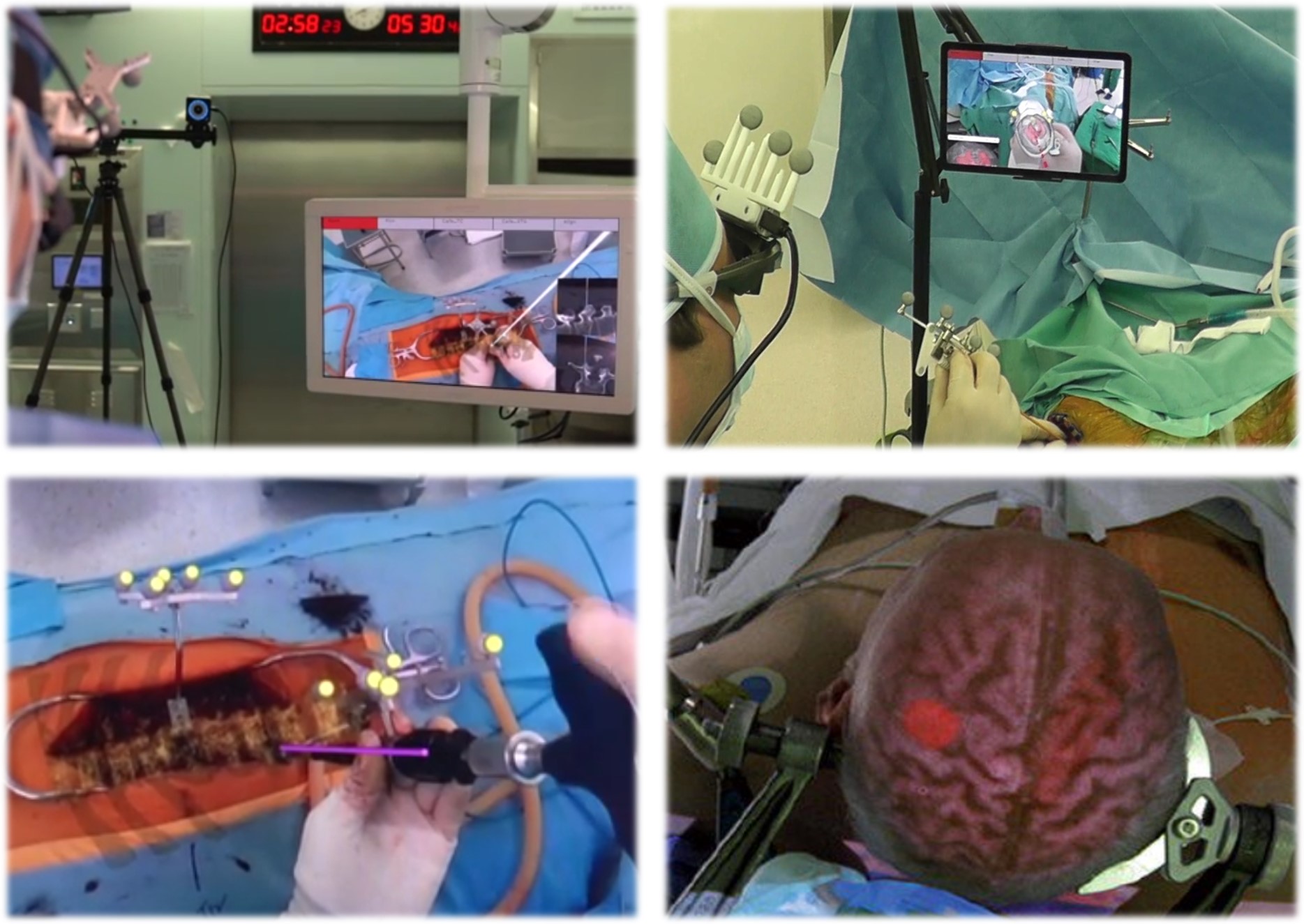

We have developed an AR navigation-based surgical guidance system that augments a surgeon’s view with intra-operative imaging and tool-tracking information. It builds on our experience from a large-scale government-supported AR project, in which we built efficient AR tracking and calibration pipelines that integrated scans from multiple devices into a single registered image. We extend this capability to the surgical setting, where precision, robustness, and intuitive visualization are critical.

The system targets simple surgical workflows in which the surgical area is captured by an X-ray C-arm and the resulting images are used to project surgical tools and auxiliary surgical information into the user’s view. The goal is to deliver an AR navigation image that gives the surgeon spatially registered guidance without disrupting the operative flow.
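As a rough illustration of what "projecting a tool into the view" involves, the sketch below maps a tracked 3D tool-tip position into pixel coordinates with a standard pinhole camera model. It is a minimal sketch under stated assumptions, not the system's actual pipeline: the function names (`transform_point`, `project_pinhole`), the rigid transform, and the intrinsic values are all hypothetical, and lens distortion is ignored.

```python
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def transform_point(R: Sequence[Sequence[float]], t: Sequence[float],
                    p: Sequence[float]) -> Vec3:
    """Apply a rigid transform p_cam = R * p + t (R is a 3x3 rotation, t a translation)."""
    return tuple(
        sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)
    )


def project_pinhole(p_cam: Sequence[float], fx: float, fy: float,
                    cx: float, cy: float) -> Optional[Tuple[float, float]]:
    """Project a camera-frame point to pixel coordinates (no lens distortion).

    Returns None when the point lies on or behind the image plane.
    """
    x, y, z = p_cam
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)


if __name__ == "__main__":
    # Hypothetical values: tracker frame aligned with the AR camera frame,
    # tool tip 0.5 m in front of the camera, made-up intrinsics.
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    tip_tracker = (0.1, -0.05, 0.5)
    tip_cam = transform_point(identity, (0.0, 0.0, 0.0), tip_tracker)
    print(project_pinhole(tip_cam, fx=800.0, fy=800.0, cx=320.0, cy=240.0))
```

In a real setup the rotation and translation would come from the calibration between the tracking device and the AR camera, and the intrinsics from the AR camera calibration.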

Within this project, we are researching and developing the following technically challenging components:

- Operationally easy X-ray view calibration: Because continuous X-ray imaging is not permitted, we developed an automated, user-friendly calibration procedure that works within this operational constraint.

- High-precision, occlusion-robust tool tracking: A combined optical and non-optical tracking pipeline that delivers reliable tool poses even under partial occlusion in the surgical field.

- Patient-pose-aware information update: Recognizing patient pose from RGB camera analysis and updating the surgical information layer accordingly.

- AR camera calibration using surgical tools: Using the tracked surgical tools themselves as calibration targets for the AR camera, simplifying the in-room calibration procedure.

- Effective AR visualization: Designing the on-view rendering of X-ray-derived surgical guidance so that it remains informative without overwhelming the surgeon’s perception.
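To make the idea of occlusion-robust tracking more concrete, the sketch below shows one simple way a combined pipeline could choose between an optical and a non-optical position estimate. This is an assumption for illustration only: the description above does not specify how the two sources are combined, and the names (`OpticalEstimate`, `select_tool_position`), the `visible_fraction` field, and the 0.5 threshold are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class OpticalEstimate:
    """Hypothetical optical tracking result for one tool."""
    position_mm: Vec3
    visible_fraction: float  # share of the tool's markers currently seen, 0..1


def select_tool_position(
    optical: Optional[OpticalEstimate],
    non_optical_mm: Optional[Vec3],
    min_visible: float = 0.5,  # assumed threshold, not taken from the project
) -> Tuple[Optional[Vec3], str]:
    """Prefer the optical estimate; fall back when the tool is too occluded."""
    if optical is not None and optical.visible_fraction >= min_visible:
        return optical.position_mm, "optical"
    if non_optical_mm is not None:
        return non_optical_mm, "non-optical"
    return None, "lost"


if __name__ == "__main__":
    occluded = OpticalEstimate(position_mm=(10.0, 20.0, 300.0), visible_fraction=0.2)
    print(select_tool_position(occluded, (10.5, 19.8, 301.0)))
```

A production system would more likely fuse the two sources continuously (for example with a filter) rather than switch between them; the hard switch here only keeps the example short.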

Programming experience

C++, Python, OpenCV, Graphics Engine APIs